The documentation is trying to say that if you use Compose and serialize (script) the model to TorchScript, the resulting model will not work correctly. Serializing a model to a TorchScript representation is useful when you want to run PyTorch models outside Python, and it is also commonly used for conversion to other ML frameworks. The serialized model contains all the information necessary to execute it. By contrast, with only a .pt file containing a state_dict, you need access to the Python source to initialize and run anything. This is an important consideration if you're planning to deploy PyTorch-trained models using ONNX or TensorFlow at a later stage.

As to why this exists, my guess is that the developers of TorchScript decided that nn.Sequential is sufficient and did not see a reason to implement support for Compose, while the torchvision developers haven't removed the module for the sake of backward compatibility.

A related question from the forums: nn.Upsample() was marked as deprecated in PyTorch versions after 0.4.0 in favor of nn.functional.interpolate(), but "I'm not able to use interpolate() inside nn. …"
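The interpolate question above is cut off in the source. Assuming the container the poster meant is nn.Sequential, one common workaround is to wrap the functional call in a small module. The sketch below is only an illustration: the class name `Interpolate`, its parameters, and the layer sizes are hypothetical and not taken from the original thread.

```python
import torch
from torch import nn
import torch.nn.functional as F


class Interpolate(nn.Module):
    """Hypothetical wrapper so F.interpolate can sit inside nn.Sequential."""

    def __init__(self, scale_factor: float = 2.0, mode: str = "nearest"):
        super().__init__()
        self.scale_factor = scale_factor
        self.mode = mode

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Delegate to the functional API; nn.Sequential only needs a Module.
        return F.interpolate(x, scale_factor=self.scale_factor, mode=self.mode)


# Illustrative model: a convolution followed by 2x upsampling.
model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    Interpolate(scale_factor=2.0),
)

out = model(torch.randn(1, 3, 16, 16))
print(out.shape)
```

Because nn.Sequential calls each entry as a module, any functional operation can be adapted this way, as long as the wrapper takes one tensor and returns one tensor.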

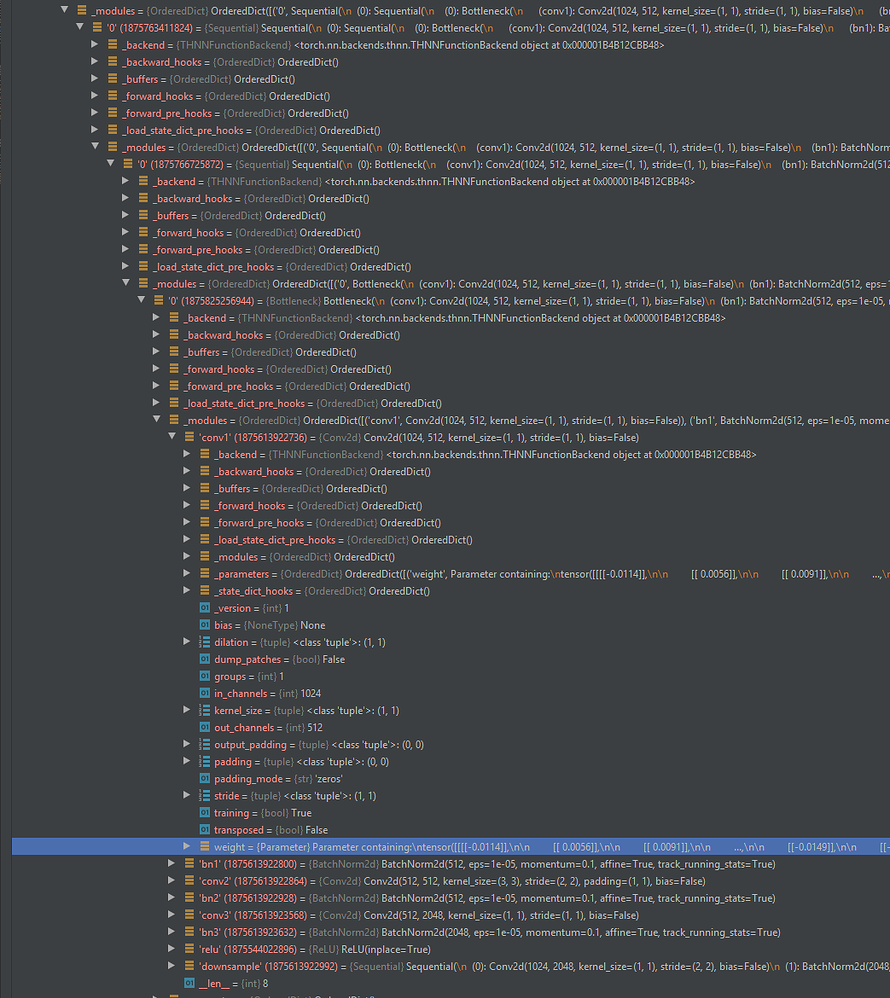

Yes, there is a good reason not to use Compose. The relevant lines from the documentation are: "This transform does not support torchscript." and "In order to script the transformations, use torch.nn.Sequential".

What does this mean? TorchScript defines an intermediate representation for a PyTorch model. In short, this intermediate representation is a serialized nn.Module, containing all weights and control flow.

For your Flatten layer, it seems to work fine, no? The snippet imports torch and nn and defines class Flatten(nn.Module) with a forward(self, input) method, whose docstring notes that input.size(0) is usually the batch size. It works because nn.Sequential only passes the output of the previous layer on to the next one: its forward loop calls each module on input in turn and returns the final input. A Sequential-style container could also handle multiple inputs and outputs; it only needs the number of outputs from the previous layer to equal the number of inputs of the next layer.
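The Flatten snippet is truncated in the source, so the following is a minimal sketch rather than the original code. It assumes the missing body reshapes everything after the batch dimension, and it also shows the TorchScript round trip described above: script the model, save it, and load it back without needing the Python class definitions. The layer sizes and the in-memory buffer are illustrative assumptions.

```python
import io

import torch
from torch import nn


class Flatten(nn.Module):
    def forward(self, input: torch.Tensor) -> torch.Tensor:
        # input.size(0) is usually the batch size; keep it, flatten the rest.
        # (Assumed body: the source cuts off before the return statement.)
        return input.reshape(input.size(0), -1)


# Illustrative model: an 8x8 input becomes 4 channels of 6x6 after the conv.
model = nn.Sequential(
    nn.Conv2d(1, 4, kernel_size=3),
    Flatten(),
    nn.Linear(4 * 6 * 6, 10),
)

# Compile to TorchScript and serialize weights plus control flow together.
scripted = torch.jit.script(model)
buffer = io.BytesIO()
torch.jit.save(scripted, buffer)

# Loading the archive does not require the Flatten class to be importable.
buffer.seek(0)
restored = torch.jit.load(buffer)

x = torch.randn(2, 1, 8, 8)
print(torch.allclose(model(x), restored(x)))
```

In a real deployment the archive would normally be written to a file path instead of an in-memory buffer.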

EDIT: As was pointed out in the comments, this does not answer the original question.

Hi, maybe I'm missing something obvious, but there does not seem to be an append() method for nn.Sequential. It would be handy when the layers of the sequential cannot all be added at once. One suggestion is to subclass nn.Sequential and override forward(self, input) so that it loops over the container's modules itself.
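The subclass code is only partly preserved in the source, so here is a hedged sketch of both ideas rather than the original answer. The names `MultiInputSequential`, `AddMul`, and `Sub` are hypothetical. The second half uses `add_module`, a long-standing nn.Module method, to add layers one at a time; recent PyTorch releases may also offer `Sequential.append`, so check your installed version.

```python
import torch
from torch import nn


class MultiInputSequential(nn.Sequential):
    """Hypothetical Sequential variant that unpacks tuple outputs."""

    def forward(self, *inputs):
        for module in self:
            # A tuple from the previous layer is spread over the next
            # layer's arguments; anything else is passed as one argument.
            if isinstance(inputs, tuple):
                inputs = module(*inputs)
            else:
                inputs = module(inputs)
        return inputs


class AddMul(nn.Module):
    def forward(self, a, b):
        return a + b, a * b  # two outputs


class Sub(nn.Module):
    def forward(self, a, b):
        return a - b  # two inputs, one output


seq = MultiInputSequential(AddMul(), Sub())
result = seq(torch.tensor([3.0]), torch.tensor([2.0]))  # (3 + 2) - (3 * 2)

# Building a plain nn.Sequential incrementally with add_module.
layers = nn.Sequential()
for i, (n_in, n_out) in enumerate([(4, 8), (8, 2)]):
    layers.add_module(f"linear{i}", nn.Linear(n_in, n_out))

print(result, len(layers))
```

The multi-input variant only works when each layer returns exactly as many values as the next layer expects, which matches the constraint stated in the discussion.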
